Why Measuring Multiple Dimensions Prevents Dysfunction

You know what happens when a team only tracks velocity? They game velocity. Points inflate, stories get split into tiny slivers, and everyone celebrates higher numbers while actual delivery quality tanks. This isn't a people problem; it's a measurement problem. That's Goodhart's Law: when a measure becomes a target, it ceases to be a good measure.

The six dimensions framework is basically a hedge against Goodhart. By tracking Quality, Responsiveness, Predictability, Productivity, Flow, and Value simultaneously, you make it really hard to game one metric without visibly hurting another. The DORA research (published in Accelerate by Forsgren, Humble, and Kim) found that high-performing orgs do well on delivery speed and stability at the same time instead of trading one for the other.

And here's what I find most useful in practice: the radar shape tells a story that numbers alone can't. A team might report "we shipped 40 stories this sprint" and that sounds great. But if the radar shows Productivity at 8 and Quality at 3? That 40-story sprint probably created a mountain of rework that'll eat next sprint alive. The visual makes these trade-offs impossible to ignore, which is exactly why some teams resist adopting it at first.

Deep Dive into Each Performance Dimension

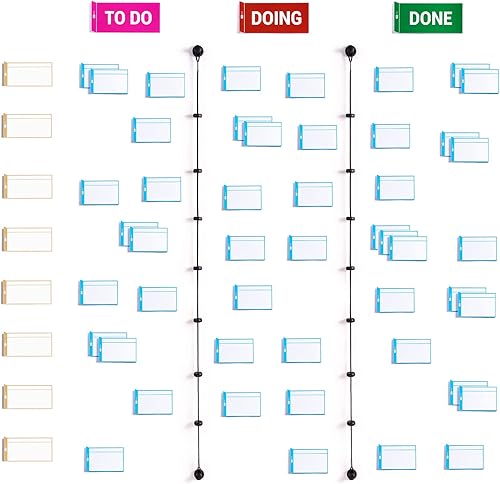

Let me walk through each one, because the definitions matter more than you'd think.

Quality: track escaped defects, not just test coverage. A team with 95% coverage that ships bugs constantly has a quality problem the coverage number hides.

Responsiveness: how fast do you react when priorities shift or a customer reports something urgent? Measure time-to-acknowledge for incidents and lead time for hot-fix requests.

Predictability: this is about trust. Did you deliver what you said you'd deliver, when you said you'd deliver it? Track forecast accuracy and sprint goal completion, not story points completed, which measures output, not reliability.

Productivity: tread carefully here. It should measure valuable output, not busywork. Delivered features that customers use, not tickets closed.

Flow: how smoothly does work move through your system? Look at WIP age, cycle time distribution, and flow efficiency. High flow means short wait times and clean handoffs. Low flow means items sit in queues forever, even if the team feels busy.

Value: the hardest dimension and the one most teams score last (or skip entirely). Are you building things customers actually want? Adoption rates, satisfaction scores, revenue impact. If you nail every other dimension but Value scores low, you're efficiently building the wrong thing.
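
To make a few of these definitions concrete, here is a minimal Python sketch of three of the measures mentioned above: escaped defects (Quality), flow efficiency (Flow), and a simple forecast hit rate (Predictability). The function names, inputs, and exact formulas are assumptions chosen for illustration; your tracker will expose different fields, and "forecast accuracy" in particular can be defined in several reasonable ways.

```python
def escaped_defect_rate(escaped: int, total_found: int) -> float:
    """Fraction of all defects found in a period that were first seen in production.

    Assumption: `total_found` counts defects caught before AND after release.
    """
    if total_found == 0:
        return 0.0  # no defects found at all, so nothing escaped
    return escaped / total_found


def flow_efficiency(active_hours: float, elapsed_hours: float) -> float:
    """Share of an item's elapsed time during which someone was working on it.

    A low value means the item mostly sat in queues.
    """
    if elapsed_hours <= 0:
        raise ValueError("elapsed_hours must be positive")
    return active_hours / elapsed_hours


def forecast_hit_rate(committed: set[str], delivered: set[str]) -> float:
    """Fraction of committed items that were actually delivered.

    Assumption: this is one simple reading of forecast accuracy. Extra items
    delivered but never committed do not raise the score.
    """
    if not committed:
        raise ValueError("committed must not be empty")
    return len(committed & delivered) / len(committed)


if __name__ == "__main__":
    # Hypothetical numbers, purely for illustration.
    print(escaped_defect_rate(escaped=3, total_found=12))
    print(flow_efficiency(active_hours=8, elapsed_hours=40))
    print(forecast_hit_rate({"A", "B", "C", "D"}, {"A", "B", "E"}))
```

Note that the hit rate counts items, not story points, which keeps it a measure of reliability rather than output.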

Facilitating Effective Assessment Sessions

Getting honest scores requires psychological safety. Full stop. If people think low scores will be used against them, they'll inflate everything and the exercise becomes theater. Start by saying, explicitly, that you're measuring the system, not individuals.

Then do silent scoring. Everyone rates all six dimensions independently before anyone speaks. This prevents anchoring, where the senior person says "I think Quality is a 7" and suddenly nobody wants to go lower. Reveal scores simultaneously.

Where the team mostly agrees (say, everyone scored Flow between 5 and 7), move on quickly. Where there's a big spread (one person scored Responsiveness as 3 and another as 8), stop and dig in. That disagreement isn't a problem. It's the most valuable part of the exercise, because it reveals fundamentally different experiences within the same team. Ask for evidence. "What made you score Quality a 4?" Not "why is your score wrong."

When you've agreed on consensus scores, pick the one or two lowest dimensions and run a quick root-cause discussion. Five-whys works fine here. But, and I cannot stress this enough, commit to improving only one or two dimensions per cycle. Teams that try to fix everything at once fix nothing. Focused improvement beats scattered effort every single time.
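
If you collect the silent scores in a spreadsheet or a form, the "where do we dig in" step is easy to automate. The sketch below is one way to do it in Python, under stated assumptions: the helper names are hypothetical, and the spread threshold of 3 points is my own cutoff (it treats the 5-to-7 Flow example as agreement and the 3-versus-8 Responsiveness example as a disagreement). Tune it to your scale.

```python
def score_spread(scores: dict[str, list[int]]) -> dict[str, int]:
    """Gap between the highest and lowest individual score per dimension."""
    return {dim: max(vals) - min(vals) for dim, vals in scores.items()}


def dimensions_to_discuss(scores: dict[str, list[int]], threshold: int = 3) -> list[str]:
    """Dimensions whose spread meets the threshold, widest disagreement first.

    Assumption: `threshold` is an illustrative cutoff, not a standard value.
    """
    spreads = score_spread(scores)
    flagged = [dim for dim, gap in spreads.items() if gap >= threshold]
    return sorted(flagged, key=lambda dim: spreads[dim], reverse=True)


def lowest_dimensions(consensus: dict[str, int], n: int = 2) -> list[str]:
    """The n lowest-scoring dimensions, as candidates for this cycle's focus."""
    return sorted(consensus, key=lambda dim: consensus[dim])[:n]


if __name__ == "__main__":
    # Hypothetical silent scores from four team members.
    silent = {
        "Flow": [5, 6, 7, 6],
        "Responsiveness": [3, 8, 5, 6],
    }
    print(dimensions_to_discuss(silent))

    # Hypothetical consensus scores after discussion.
    consensus = {"Quality": 4, "Flow": 6, "Value": 3, "Productivity": 8}
    print(lowest_dimensions(consensus))
```

The code only tells you where to spend the conversation. The evidence questions still have to happen in the room.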

Tracking Progress and Recognizing Patterns Over Time

The real payoff comes after three or four assessments, when you start seeing patterns.

A spike pattern, where one dimension is way higher than the rest, usually means over-optimization. I worked with a team that scored Productivity at 9 and everything else between 4 and 6. They were churning out features at an incredible rate, but quality was suffering, flow was choppy, and stakeholders couldn't predict when anything would land. They'd found a local maximum that felt like success but wasn't.

A flat-low pattern, with everything below 5, usually points to something outside the team's control. Insufficient staffing, unclear priorities, organizational churn. That's a leadership conversation, not a team retrospective topic.

What you want is a growing hexagon: all dimensions improving gradually over time. Watch for trade-off patterns too. If improving Productivity always drops Quality, you've found a constraint that process tweaks alone won't fix. Maybe you need better tooling, or the architecture has a testing bottleneck.

And compare across teams when you can. One team's strength in Flow could teach another team plenty. Save everything with dates and notes. Future team members will thank you when they can see three quarters of trajectory instead of starting from scratch.
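
The patterns described above can be roughly checked in code once you have a few dated assessments saved. This is a minimal Python sketch, not a definitive classifier: the function names are hypothetical, the spike rule (top score at least 3 points above the runner-up) is an assumption fitted to the Productivity-at-9 example, and "growing" is simplified to comparing the first and latest assessment.

```python
def classify_shape(scores: dict[str, float],
                   spike_gap: float = 3.0,
                   low_ceiling: float = 5.0) -> str:
    """Label one assessment as 'flat-low', 'spike', or 'balanced'.

    Assumptions: `spike_gap` and `low_ceiling` are illustrative thresholds.
    Expects at least two dimensions.
    """
    values = sorted(scores.values())
    if all(v < low_ceiling for v in values):
        return "flat-low"  # everything below the ceiling
    if values[-1] - values[-2] >= spike_gap:
        return "spike"  # one dimension far above the runner-up
    return "balanced"


def is_growing(history: list[dict[str, float]]) -> bool:
    """True if no dimension regressed and at least one improved.

    `history` is ordered oldest to newest. Only the first and last
    assessments are compared, so dips in between are ignored.
    """
    if len(history) < 2:
        return False
    first, last = history[0], history[-1]
    no_regression = all(last[dim] >= first[dim] for dim in first)
    some_gain = any(last[dim] > first[dim] for dim in first)
    return no_regression and some_gain


if __name__ == "__main__":
    # Hypothetical scores in the shape of the over-optimizing team.
    team = {"Quality": 5, "Responsiveness": 4, "Predictability": 4,
            "Productivity": 9, "Flow": 5, "Value": 6}
    print(classify_shape(team))
```

A label like "spike" is a prompt for a conversation, not a verdict. The notes you save alongside each assessment are what explain why the shape looks the way it does.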